AI SRE applies machine learning and large language models to reliability workflows so teams can assemble evidence, form testable hypotheses, and execute safer mitigations faster while humans remain accountable.

AI SRE is not a new label for automation, observability, or alert correlation. It is a way of running incident response with an AI layer that is constrained by evidence, verification, and governance. When this works, responders stop spending the first 20 minutes hunting for context across dashboards, deploy timelines, tickets, and tribal knowledge. Instead, they start with a coherent incident narrative and a short list of next checks that are safe to run.

The difference is the unit of value. Traditional reliability tooling optimizes for signals. AI SRE optimizes for decisions and execution under control. That shift changes what “good” looks like: fewer competing stories, faster ownership alignment, fewer dead-end investigations, and less manual glue work between tools.

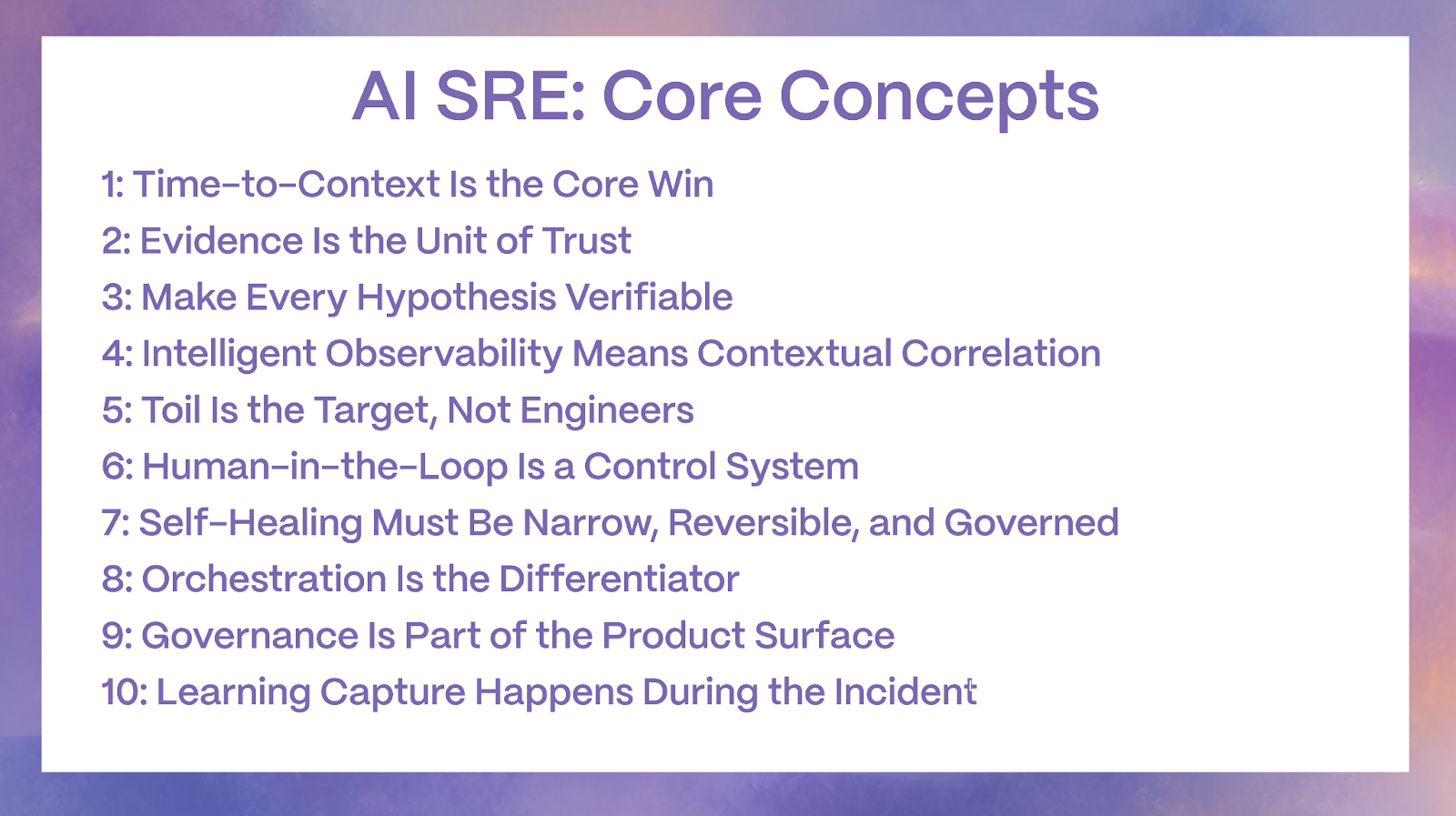

Key Takeaways

- AI SRE targets time-to-context first because ambiguity, not typing, slows incidents down.

- The goal is toil reduction and safer decisions, not replacing engineers.

- Intelligent observability means contextual correlation and change awareness, not more alerts.

- Self-healing only works when actions are bounded, reversible, and governed.

- Human-in-the-loop is a system design pattern enforced by RBAC, approvals, and audit trails.

Concept 1: Time-to-Context Is the Core Win

Time-to-context is the time it takes to answer four questions with confidence: what is failing, what changed, what is impacted, and what to verify next. This is where incidents stall, especially in multi-service environments where symptoms propagate and dashboards disagree.

AI SRE treats time-to-context as a first-class metric because its effects compound. When responders get a credible narrative early, the rest of the incident becomes simpler: paging is cleaner, hypotheses are sharper, mitigations are safer, and communications are more consistent.

What this concept changes in practice:

- Fewer parallel investigations that duplicate effort

- Faster convergence on the highest-signal evidence

- Earlier identification of the smallest safe mitigation path
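Treating time-to-context as a metric requires a precise stopping point. The following is a minimal sketch, assuming each of the four questions gets a timestamp when the team first answers it with confidence. The `IncidentClock` class and its field names are hypothetical and are not part of any product API.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

# The four questions that define time-to-context.
QUESTIONS = ("what_is_failing", "what_changed", "what_is_impacted", "what_to_verify_next")


@dataclass
class IncidentClock:
    detected_at: datetime
    # When each question was first answered with confidence (hypothetical field).
    answered_at: dict[str, datetime]

    def time_to_context(self) -> Optional[timedelta]:
        # Context exists only once all four questions are answered, so the
        # metric runs from detection to the latest of the four answers.
        if any(q not in self.answered_at for q in QUESTIONS):
            return None  # still missing context: report nothing rather than a partial number
        return max(self.answered_at[q] for q in QUESTIONS) - self.detected_at


t0 = datetime(2024, 5, 1, 12, 0)
clock = IncidentClock(
    detected_at=t0,
    answered_at={
        "what_is_failing": t0 + timedelta(minutes=3),
        "what_changed": t0 + timedelta(minutes=7),
        "what_is_impacted": t0 + timedelta(minutes=5),
        "what_to_verify_next": t0 + timedelta(minutes=9),
    },
)
print(clock.time_to_context())  # 0:09:00
```

Taking the latest of the four answers, rather than the first, keeps the metric honest: context is not established until every question has an answer.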

Concept 2: Evidence Is the Unit of Trust

In AI SRE, trust is not earned through fluency. It is earned through an evidence trail that a responder can verify quickly. The system should behave like a rigorous investigator: it cites artifacts, points to time windows, and shows why a hypothesis is ranked highly.

A reliable evidence model separates:

- Observed facts from telemetry and change records

- Inferences that connect those facts into hypotheses

- Next checks that can confirm or falsify the hypotheses

- Proposed actions that follow successful verification

How evidence should look operationally:

- Each claim maps to a specific log line cluster, metric chart window, trace pattern, deploy event, or config change

- The incident record captures these artifacts so verification does not require context hunting

- If evidence is missing, the system surfaces that gap instead of smoothing it over
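The separation between facts, inferences, checks, and actions can be encoded directly in the incident record. The sketch below is illustrative only. `Claim`, `Kind`, `evidence_gaps`, and the `metrics://` artifact link format are hypothetical names chosen for this example. It flags facts that have no linked artifact and actions proposed before any check has passed, which is one way to surface gaps instead of smoothing them over.

```python
from dataclasses import dataclass, field
from enum import Enum


class Kind(Enum):
    FACT = "observed fact"       # from telemetry and change records
    INFERENCE = "inference"      # connects facts into a hypothesis
    CHECK = "next check"         # can confirm or falsify a hypothesis
    ACTION = "proposed action"   # should follow successful verification


@dataclass
class Claim:
    kind: Kind
    text: str
    # Links a responder can open: log cluster, metric window, trace, deploy, config change.
    artifacts: list[str] = field(default_factory=list)
    verified: bool = False       # only meaningful for CHECK claims


def evidence_gaps(claims: list[Claim]) -> list[str]:
    """Surface what is missing instead of smoothing it over."""
    any_check_passed = any(c.kind is Kind.CHECK and c.verified for c in claims)
    gaps = []
    for c in claims:
        if c.kind is Kind.FACT and not c.artifacts:
            gaps.append(f"unsupported fact: {c.text}")
        elif c.kind is Kind.ACTION and not any_check_passed:
            gaps.append(f"action before verification: {c.text}")
    return gaps


claims = [
    Claim(Kind.FACT, "checkout p99 latency rose at 14:02",
          artifacts=["metrics://checkout/p99?from=13:45&to=14:15"]),
    Claim(Kind.FACT, "payments pods restarting"),  # nothing attached yet
    Claim(Kind.INFERENCE, "the 14:00 deploy likely introduced the regression"),
    Claim(Kind.CHECK, "compare error rates on old vs new pods"),
    Claim(Kind.ACTION, "roll back the 14:00 deploy"),
]
gaps = evidence_gaps(claims)
for gap in gaps:
    print(gap)
```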

Concept 3: Make Every Hypothesis Verifiable

Root cause analysis in the first 30 minutes rarely produces a single answer. It produces a ranked list of plausible causes with a plan to narrow uncertainty fast. AI SRE treats RCA as a hypothesis loop rather than a narrative generator.

High-quality hypothesis output includes:

- Most likely hypothesis with linked evidence

- Competing hypotheses with differentiating signals

- The shortest safe test that changes confidence quickly

- A clear statement of what would disprove the hypothesis

Why testability matters:

- It prevents “confidence drift” where the team aligns around an unverified story

- It makes verification a default behavior rather than a heroic effort

- It creates a traceable decision chain for post-incident review
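A hypothesis record that carries its own test and falsification condition makes testability a structural requirement rather than a habit. In this minimal sketch, all names are hypothetical, `rank` refuses untestable hypotheses outright, and the confidence values are placeholders for whatever scoring method a team actually uses.

```python
from dataclasses import dataclass


@dataclass
class Hypothesis:
    statement: str
    confidence: float            # placeholder score in [0, 1] from whatever ranking method is used
    evidence: list[str]          # links to supporting artifacts
    differentiating_signal: str  # what separates it from competing hypotheses
    shortest_safe_test: str      # the quickest check that moves confidence
    disproved_if: str            # the observation that would falsify it


def rank(hypotheses: list[Hypothesis]) -> list[Hypothesis]:
    """Sort by confidence, refusing any hypothesis that cannot be tested."""
    for h in hypotheses:
        if not h.shortest_safe_test or not h.disproved_if:
            raise ValueError(f"untestable hypothesis: {h.statement!r}")
    return sorted(hypotheses, key=lambda h: h.confidence, reverse=True)


ranked = rank([
    Hypothesis("connection pool exhaustion in orders-db", 0.35,
               ["trace: orders spans waiting on pool"],
               "pool wait time up while DB CPU stays flat",
               "read pool wait metrics for orders-db",
               "pool wait time is at baseline"),
    Hypothesis("bad config pushed to orders-api at 14:00", 0.55,
               ["config change event 14:00"],
               "errors begin exactly at rollout",
               "diff live config against the previous version",
               "errors predate the config change"),
])
for h in ranked:
    print(f"{h.confidence:.2f}  {h.statement}  (test: {h.shortest_safe_test})")
```

Failing loudly on an untestable hypothesis is a deliberate choice: it keeps a plausible but unfalsifiable story from quietly rising to the top of the list.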

Concept 4: Intelligent Observability Means Contextual Correlation

Observability provides visibility. Intelligent observability provides incident context. The concept shift is from isolated telemetry to telemetry interpreted within service topology, change events, and operational history.

Intelligent observability focuses on:

- Clustering many alerts into a single incident signature

- Correlating symptoms across services and layers

- Prioritizing signals by blast radius and customer impact

- Explaining correlation in plain operational terms rather than statistical output

Change awareness is a defining trait:

- Deploys, feature flags, and config changes are treated as first-class signals

- The system defaults to “what changed in the blast radius” as early evidence

- Incident narratives are anchored to a timeline rather than a dashboard snapshot
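Alert clustering and change awareness can be illustrated with two small functions. This is a simplified sketch, not a production correlation engine. It groups alerts that share a symptom and fire close together in time, treats the services in a cluster as the blast radius, and then lists changes to those services shortly before the first symptom. Real systems would also use service topology. `Alert`, `Change`, `cluster`, and `recent_changes` are hypothetical names, and the 5-minute window and 1-hour lookback are arbitrary example values.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Alert:
    service: str
    symptom: str       # e.g. "5xx_rate", "latency_p99"
    at: datetime


@dataclass
class Change:
    service: str
    description: str   # deploy, feature flag flip, or config change
    at: datetime


def cluster(alerts: list[Alert], window: timedelta) -> list[list[Alert]]:
    """Group alerts with the same symptom that fire within `window` of the previous one."""
    by_symptom: dict[str, list[Alert]] = defaultdict(list)
    for a in sorted(alerts, key=lambda a: a.at):
        by_symptom[a.symptom].append(a)
    clusters = []
    for group in by_symptom.values():
        current = [group[0]]
        for a in group[1:]:
            if a.at - current[-1].at <= window:
                current.append(a)
            else:
                clusters.append(current)
                current = [a]
        clusters.append(current)
    return clusters


def recent_changes(changes: list[Change], blast_radius: set[str],
                   first_symptom: datetime, lookback: timedelta) -> list[Change]:
    """'What changed in the blast radius' shortly before the first symptom, newest first."""
    hits = [c for c in changes
            if c.service in blast_radius and first_symptom - lookback <= c.at <= first_symptom]
    return sorted(hits, key=lambda c: c.at, reverse=True)


t0 = datetime(2024, 5, 1, 14, 0)
alerts = [
    Alert("checkout", "5xx_rate", t0 + timedelta(minutes=2)),
    Alert("cart", "5xx_rate", t0 + timedelta(minutes=3)),
    Alert("payments", "5xx_rate", t0 + timedelta(minutes=4)),
    Alert("search", "latency_p99", t0 + timedelta(hours=2)),
]
incidents = cluster(alerts, window=timedelta(minutes=5))
signature = incidents[0]                  # three services, one symptom
radius = {a.service for a in signature}
changes = [
    Change("cart", "deploy v412", t0),
    Change("search", "flag new_ranker on", t0 - timedelta(minutes=10)),
    Change("payments", "timeout 2s -> 1s", t0 - timedelta(days=3)),
]
suspects = recent_changes(changes, radius, signature[0].at, lookback=timedelta(hours=1))
print(len(incidents), [c.description for c in suspects])  # 2 ['deploy v412']
```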

Concept 5: Toil Is the Target, Not Engineers

Toil is repetitive, interrupt-driven, low-leverage operational work that should be eliminated over time. AI SRE is most valuable when it automates the glue work that happens in every incident, not when it tries to “think like a senior engineer.”

High-impact toil buckets AI SRE should reduce:

- Alert intake and deduplication

- Ownership discovery and routing

- Evidence gathering and attachment to the incident record

- Timeline assembly from real events

- Drafting stakeholder updates in a consistent format

- Generating first-pass postmortem artifacts with evidence links

The concept that matters:

- AI SRE should remove manual work that never should have been manual

- It should not shift accountability away from engineers

- It should increase the amount of time engineers can spend on prevention
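Two of the toil buckets above, ownership routing and consistent stakeholder updates, reduce to simple, deterministic code once a service catalog exists. The following sketch assumes a hypothetical `SERVICE_OWNERS` catalog and a fixed update template. The AI layer would fill in the fields, and a human would still decide when to send the update.

```python
from dataclasses import dataclass

# Hypothetical service catalog mapping each service to its owning team.
SERVICE_OWNERS = {
    "checkout": "team-payments",
    "cart": "team-payments",
    "search": "team-discovery",
}


def route(services: set[str]) -> dict[str, list[str]]:
    """Group affected services by owning team."""
    routes: dict[str, list[str]] = {}
    for svc in sorted(services):
        # Unknown ownership goes to the incident commander so the gap is visible.
        team = SERVICE_OWNERS.get(svc, "incident-commander")
        routes.setdefault(team, []).append(svc)
    return routes


@dataclass
class StatusUpdate:
    status: str          # e.g. "investigating", "mitigating", "resolved"
    impact: str
    current_action: str
    next_update_min: int

    def render(self) -> str:
        # Same fields in the same order every time, so readers know where to look.
        return (f"Status: {self.status}\n"
                f"Impact: {self.impact}\n"
                f"Current action: {self.current_action}\n"
                f"Next update: in {self.next_update_min} minutes")


print(route({"checkout", "cart", "ledger"}))
update = StatusUpdate("investigating", "elevated checkout errors for some users",
                      "verifying whether the 14:00 cart deploy is involved", 30)
print(update.render())
```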

Concept 6: Human-in-the-Loop Is a Control System

Human-in-the-loop is not a disclaimer. It is a control system: decision ownership, verification gates, and enforced constraints that keep production safe under pressure.

Human-in-the-loop works when:

- Incident roles remain explicit, especially decision authority

- Approvals are enforced by workflow, not policy memory

- Recommendations always include verification steps

- Execution privileges are scoped, time-bounded, and auditable

A practical autonomy ladder:

- Assist: summarize, correlate, draft hypotheses

- Recommend: propose next best checks and mitigations with evidence

- Approve: require explicit confirmation for high-impact steps

- Execute: perform bounded actions through trusted automation rails

- Learn: incorporate outcomes into future ranking and retrieval
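The approval rungs of the ladder can be enforced in code rather than left to memory. This is a minimal sketch under stated assumptions: `Autonomy` and `may_execute` are hypothetical names, "high impact" is assumed to be classified elsewhere, and Learn is treated as a feedback loop rather than a permission level, so it grants no extra execution rights.

```python
from enum import IntEnum
from typing import Optional


class Autonomy(IntEnum):
    ASSIST = 1      # summarize, correlate, draft hypotheses
    RECOMMEND = 2   # propose checks and mitigations with evidence
    APPROVE = 3     # may act, but only with explicit human confirmation
    EXECUTE = 4     # may run bounded, low-impact actions unattended


def may_execute(granted: Autonomy, high_impact: bool, approved_by: Optional[str]) -> bool:
    """Decide whether the AI layer may run an action at its granted level."""
    if granted < Autonomy.APPROVE:
        return False  # assist and recommend levels never change production
    if approved_by is not None:
        return True   # a named human confirmed this specific action
    # Without an approver, only the top rung may act, and never on high-impact steps.
    return granted >= Autonomy.EXECUTE and not high_impact


print(may_execute(Autonomy.EXECUTE, high_impact=True, approved_by=None))         # False
print(may_execute(Autonomy.EXECUTE, high_impact=True, approved_by="ic-oncall"))  # True
```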

Concept 7: Self-Healing Must Be Narrow, Reversible, and Governed

Self-healing is a maturity outcome, not a starting point. The concept is not “automatic fixes.” The concept is “bounded, reversible actions that reduce harm and stop safely if conditions are not met.”

Safe auto-remediation candidates share four properties:

- Bounded: limited blast radius and scope

- Reversible: a fast manual or automatic rollback path

- Measurable: clear success signals and stop conditions

- Governed: RBAC, approval rules, and full audit trails

Examples that usually fit the concept:

- Restarting a single stateless instance with rate limits

- Scaling within pre-approved limits

- Shifting traffic away from a degraded region

- Rolling back a feature flag with health gating

- Running a known-safe runbook step with explicit stop conditions

What does not fit:

- Broad, irreversible, or high-risk changes that touch data integrity, security posture, or global network policy
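The four properties translate into preconditions that a remediation runner checks before acting. They also require a health check after every step that triggers rollback when it fails. The sketch below is hypothetical throughout: `Remediation`, `run`, the policy name, and the simulated health readings are illustrative. It scales a service by one replica per step within a pre-approved step limit.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Remediation:
    steps: list[Callable[[], None]]
    rollback: Optional[Callable[[], None]]    # reversible: how to undo
    healthy: Optional[Callable[[], bool]]     # measurable: success signal / stop condition
    policy: Optional[str]                     # governed: which policy permits this action


def unsafe_reasons(r: Remediation, preapproved_max_steps: int) -> list[str]:
    reasons = []
    if len(r.steps) > preapproved_max_steps:
        reasons.append("not bounded")
    if r.rollback is None:
        reasons.append("not reversible")
    if r.healthy is None:
        reasons.append("not measurable")
    if r.policy is None:
        reasons.append("not governed")
    return reasons


def run(r: Remediation, preapproved_max_steps: int) -> str:
    reasons = unsafe_reasons(r, preapproved_max_steps)
    if reasons:
        return "refused: " + ", ".join(reasons)
    for step in r.steps:
        step()
        if not r.healthy():
            r.rollback()  # stop condition hit: undo everything and hand back to a human
            return "rolled back"
    return "succeeded"


# Example: scale checkout one replica at a time, with simulated health readings.
replicas = {"checkout": 4}
before = dict(replicas)
readings = iter([True, False])  # healthy after step 1, degraded after step 2


def add_replica() -> None:
    replicas["checkout"] += 1


scale_up = Remediation(
    steps=[add_replica, add_replica],
    rollback=lambda: replicas.update(before),
    healthy=lambda: next(readings),
    policy="scale-checkout-within-limits",
)
result = run(scale_up, preapproved_max_steps=2)
print(result, replicas)  # rolled back {'checkout': 4}
```

Rolling back on the first failed health check, rather than continuing and hoping the next step helps, is what makes the stop condition meaningful.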

Concept 8: Orchestration Is the Differentiator

The model is not the product. The product is the workflow that sequences investigation and enforces control. Orchestration determines what the system can do, when it can do it, what it must show, and what requires approval.

Effective orchestration patterns:

- Gather symptoms and scope from telemetry

- Pull recent changes in the affected blast radius

- Map dependencies and likely propagation paths

- Rank hypotheses with evidence and tests

- Propose reversible mitigations first

- Capture everything into the incident record automatically

Orchestration also prevents secondary incidents:

- Tool allowlists prevent unbounded querying

- Rate limits prevent query storms during outages

- Escalation triggers ensure humans stay in control when uncertainty is high
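Tool allowlists, rate limits, and escalation triggers can all sit in one gate in front of the AI layer's tool calls. This minimal sketch assumes a sliding-window rate limit and a single confidence threshold for escalation. `ToolGate`, `next_step`, the tool names, and the 0.6 threshold are hypothetical. The injectable clock exists only so the example is deterministic.

```python
import time
from typing import Callable, Optional


class ToolGate:
    """Checks every tool call the AI layer wants to make before it runs."""

    def __init__(self, allowlist: set[str], max_calls: int, per_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.allowlist = allowlist
        self.max_calls = max_calls
        self.per_seconds = per_seconds
        self.clock = clock
        self.recent: list[float] = []  # timestamps of recently allowed calls

    def check(self, tool: str) -> Optional[str]:
        """Return None if the call may proceed, otherwise the reason it is blocked."""
        if tool not in self.allowlist:
            return f"{tool} is not allowlisted"
        now = self.clock()
        # Sliding window: only calls within the last `per_seconds` count.
        self.recent = [t for t in self.recent if now - t < self.per_seconds]
        if len(self.recent) >= self.max_calls:
            return "rate limit reached"
        self.recent.append(now)
        return None


def next_step(top_confidence: float, threshold: float = 0.6) -> str:
    # When no hypothesis is clearly ahead, hand control back to a human.
    return "continue" if top_confidence >= threshold else "escalate to incident commander"


fake_now = [0.0]
gate = ToolGate({"query_metrics", "list_deploys"}, max_calls=2, per_seconds=10,
                clock=lambda: fake_now[0])
results = [gate.check("query_metrics"), gate.check("list_deploys"),
           gate.check("query_metrics"), gate.check("delete_pod")]
fake_now[0] = 11.0  # the window has passed
results.append(gate.check("query_metrics"))
print(results)
print(next_step(0.4))
```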

Concept 9: Governance Is Part of the Product Surface

AI SRE fails in real organizations when governance is bolted on later. The concept is governance-by-design: controls that align with how production systems are already managed.

Core governance primitives:

- Least-privilege RBAC and scoped permissions

- Time-bounded credentials for incident-time access

- Audit logs that capture evidence retrieval, proposals, approvals, and execution

- Change control alignment with existing deployment and flag governance

- Policy-as-code for automated actions

- Stop conditions and rollback policies for any execution

The practical outcome:

- Security and compliance reviews become accelerators rather than blockers because the system behaves like a disciplined operator, not an unbounded assistant
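Policy-as-code, time-bounded credentials, and audit logging fit together naturally. Every automated action is checked against a declared policy and an expiring grant, and every decision, including denials, is logged. The following sketch uses hypothetical names (`POLICY`, `Grant`, `authorize`, `AUDIT_LOG`). A real deployment would use the organization's existing policy engine and audit store rather than an in-memory list.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Hypothetical policy-as-code: which roles may trigger each automated action.
POLICY = {
    "rollback_flag": {"roles": {"responder", "incident_commander"}, "needs_approval": False},
    "shift_traffic": {"roles": {"incident_commander"}, "needs_approval": True},
}

AUDIT_LOG: list[dict] = []


@dataclass
class Grant:
    role: str
    expires_at: datetime  # incident-time access that lapses on its own


def authorize(action: str, grant: Grant, approved: bool, now: datetime) -> bool:
    rule = POLICY.get(action)
    if rule is None:
        allowed, reason = False, "no policy for action"
    elif now >= grant.expires_at:
        allowed, reason = False, "credential expired"
    elif grant.role not in rule["roles"]:
        allowed, reason = False, "role not permitted"
    elif rule["needs_approval"] and not approved:
        allowed, reason = False, "approval required"
    else:
        allowed, reason = True, "allowed"
    # Record every decision, including denials, so reviews can replay what happened.
    AUDIT_LOG.append({"at": now.isoformat(), "action": action, "role": grant.role,
                      "allowed": allowed, "reason": reason})
    return allowed


start = datetime(2024, 5, 1, 14, 0)
grant = Grant(role="responder", expires_at=start + timedelta(hours=2))
decisions = [
    authorize("rollback_flag", grant, approved=False, now=start + timedelta(minutes=10)),
    authorize("shift_traffic", grant, approved=True, now=start + timedelta(minutes=12)),
    authorize("rollback_flag", grant, approved=False, now=start + timedelta(hours=3)),
]
for entry in AUDIT_LOG:
    print(entry["at"], entry["action"], entry["reason"])
```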

Concept 10: Learning Capture Happens During the Incident

AI SRE should improve prevention by capturing the incident story as it unfolds. The concept is real-time operational memory: evidence and decisions recorded while they are true, not reconstructed later.

Learning capture includes:

- Live timeline assembly with linked artifacts

- Structured summaries of “what changed” and “what was impacted”

- Action tracking: what was tried and the observed effect

- Postmortem-ready sections that reduce manual reconstruction

- Tagging patterns so future incidents retrieve relevant history faster

Why this matters:

- Better timelines lead to better prevention

- Repeat incidents drop when learning is reusable and queryable

- The organization’s reliability maturity improves without adding meeting load
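Real-time operational memory can start as a timeline ordered by event time, plus a tag index for retrieval. This is a hypothetical sketch: `Entry`, `IncidentRecord`, `History`, the `deploy://` artifact link, and the incident IDs are invented for illustration. Similarity here is simply the count of shared tags, a deliberately crude stand-in for richer retrieval.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from datetime import datetime


@dataclass
class Entry:
    at: datetime
    kind: str                 # "change", "impact", "action", or "observation"
    text: str
    artifacts: list[str] = field(default_factory=list)
    effect: str = ""          # for actions: the observed result


@dataclass
class IncidentRecord:
    incident_id: str
    tags: set[str] = field(default_factory=set)
    entries: list[Entry] = field(default_factory=list)

    def log(self, entry: Entry) -> None:
        # Captured as it happens, but kept in event-time order for the timeline.
        self.entries.append(entry)
        self.entries.sort(key=lambda e: e.at)

    def actions_tried(self) -> list[tuple[str, str]]:
        return [(e.text, e.effect) for e in self.entries if e.kind == "action"]


class History:
    """Tag index so a new incident can retrieve similar past incidents."""

    def __init__(self) -> None:
        self.by_tag: dict[str, list[str]] = defaultdict(list)

    def add(self, record: IncidentRecord) -> None:
        for tag in record.tags:
            self.by_tag[tag].append(record.incident_id)

    def similar(self, tags: set[str]) -> list[str]:
        # Rank past incidents by how many tags they share with the new one.
        shared: dict[str, int] = defaultdict(int)
        for tag in tags:
            for incident_id in self.by_tag.get(tag, []):
                shared[incident_id] += 1
        return sorted(shared, key=lambda i: (-shared[i], i))


t = datetime(2024, 5, 1, 14, 0)
rec = IncidentRecord("INC-101", tags={"checkout", "deploy-regression"})
rec.log(Entry(t.replace(minute=20), "action", "rolled back deploy v412",
              effect="5xx rate back to baseline"))
rec.log(Entry(t, "change", "deploy v412 to cart", artifacts=["deploy://cart/v412"]))

history = History()
history.add(IncidentRecord("INC-042", tags={"checkout", "db-pool"}))
history.add(IncidentRecord("INC-077", tags={"checkout", "deploy-regression"}))
print([e.kind for e in rec.entries], history.similar(rec.tags))
```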

What “Good” AI SRE Looks Like in Practice

These AI SRE concepts ultimately point to one outcome: incident response becomes calmer, faster, and more repeatable because the system reduces ambiguity without weakening control. When the concepts are implemented correctly, responders spend less time assembling a picture of reality and more time verifying the highest-signal hypotheses and executing the safest mitigation path. The operating model shifts from reactive scrambling to disciplined, evidence-first execution.

The easiest way to recognize a mature AI SRE program is by what it does not do. It does not flood teams with more alerts, more channels, or more noisy recommendations. It does not force responders to trust fluent summaries without receipts. It does not treat automation as a flex. Instead, it compresses the early incident phase by delivering a coherent narrative quickly, then it protects the rest of the lifecycle with verification loops, explicit role ownership, and governance that matches the risk level of each action.

In practical terms, strong AI SRE programs follow a predictable arc. They start with read-only assistance that improves time-to-context, consolidates signals, and captures an evidence trail automatically. They expand into assisted actions only when approvals, RBAC, and auditability are real and proven in production incidents. They reserve self-healing for narrow, reversible runbooks with clear stop conditions and rollback paths. Each step increases capability without increasing exposure.

At Rootly, we help SRE and platform teams operationalize these concepts inside the incident workflow, so AI assistance stays evidence-driven, verifiable, and governed. If you want to see how this fits into your current incident process, data sources, and approval model, book a demo and we will walk through a practical rollout path tailored to your environment.