Over the past year, more than 46 companies started offering what they call an “AI SRE.”

The name is enticing, sure. But it’s also confusing. Most of these vendors say they’ll replace your “most experienced SRE” with AI. That really makes you wonder if they’ve ever worked with a knowledgeable SRE, but that’s beside the point.

As the old adage says, naming is hard. And “AI SRE” is how the industry has decided to name solutions that correlate logs, metrics, and traces to automate root cause analysis and recommend (and even execute) actions to resolve incidents, when possible.

This concept is not new. Our very own Head of Rootly AI Labs, Sylvain Kalache, has held a patent on self-healing systems for over 10 years, from the time he worked as an SRE at LinkedIn (which uses Rootly for incident management).

I’ve been heads down in the AI SRE space since the concept started circulating. In this article, I want to break down everything I’ve found (capabilities, under-the-hood mechanics, adoption patterns, etc.). I’ll keep updating this article as my understanding evolves and as we learn more about AI SRE.

Key Takeaways

- AI SRE applies artificial intelligence to incident operations by detecting, diagnosing, and resolving failures with less human toil through correlation, modeling, and automated recommendations.

- Traditional SRE models are hitting scale limits as distributed architectures, alert overload, and fragmented knowledge make human-only incident response slower, costlier, and harder to maintain.

- LLMs unlocked machine understanding of the incident domain by interpreting logs, diffs, runbooks, and postmortems as semantic inputs that can support hypothesis generation and summarization.

- Adoption follows a maturity curve instead of a single switch: it begins with read-only insights, then advised actions, then approval-based remediation, and finally autonomous operation with guardrails.

- AI SRE success is measured through multiple metric categories including technical reliability metrics, on-call productivity metrics, and business metrics such as revenue protection and customer satisfaction.

What is AI SRE?

We’ve lived through traditional SRE workflows for decades, and we know how they work. You check your dashboards, you jump when you get alerts, and you start formulating hypotheses about what could’ve gone wrong.

Incident response has historically been human-driven and reactive, requiring engineers to triage alerts, reconstruct context, escalate across teams, and mitigate under time pressure. AI SRE introduces assistance into that loop, transforming the reliability pipeline from manual investigation to predictive and assisted remediation.

How AI SRE differs from adjacent concepts:

- AIOps: AI SRE optimizes for reliability outcomes (MTTR, MTTD, change failure rate) rather than reducing generic operations noise.

- Traditional SRE: AI SRE shifts from reactive firefighting to machine-assisted diagnosis and remediation orchestration.

- Observability tools: AI SRE acts as a decision layer that sits on top of telemetry rather than merely collecting or visualizing it.

Why now?

In my opinion, two converging forces explain why AI SRE is emerging now:

- Self-healing systems have been around for decades. But the recent evolution of LLMs and ML capabilities made it possible to process logs, metrics, traces, runbooks, code diffs, deployment metadata, and postmortems at an unprecedented scale. Once models could read and reason across these artifacts, the reliability surface became machine-explorable for the first time, enabling workflows traditionally reserved for senior incident commanders and domain experts.

- The systems our modern world has come to depend on are extraordinarily complex. Every month, you’ll find news about cancelled flights, hospitals with booking issues, or an electric car stuck in a basement. It’s not that these systems aren’t resilient; they are. But we need more tools to support the rise in complexity. The advent of vibe-coding is also a scary trumpet for SREs, and they’ll need to be ready to respond in kind.

Why Traditional SRE Is Breaking

Modern production infrastructure has outpaced the cognitive and operational bandwidth of human-only SRE models. Multi-cloud, serverless, edge compute, ephemeral environments, and microservices introduced a combinatorial surface of failure that cannot be reasoned about through dashboards and Slack alone. The result is an industry-wide reliability bottleneck that shows up in metrics, headcount, cost, morale, and customer experience.

Three Compounding Failure Modes

1. Scale, Fragmentation, and Dependency Chains

Applications decomposed into hundreds of microservices, queues, proxies, and data stores. SLIs and SLOs became distributed across teams and failure domains, requiring responders to reconstruct causal graphs under pressure. Latency amplification, cascading failures, and circuit-breaker flapping became harder to diagnose without machine correlation across logs, metrics, traces, and deployment metadata.

Industry signal: Fortune 500 cloud-native teams report thousands of components per environment, with incident bridges now spanning 6–20+ functional owners during SEV0–1 events.

2. Alert Fatigue, Cognitive Load, and On-Call Toil

Even mature orgs drown in noisy alerts. Golden signals like latency, errors, saturation, and traffic trigger faster than humans can triage. Repetitive playbooks, context switching, and 2 a.m. wake-ups contribute to burnout and responder churn.

Known pattern: Google’s SRE books and multiple surveys attribute rising on-call burnout to alert noise, context fragmentation, and operational toil.

3. Knowledge Distribution and the Temporal Gap

Incident resolution depends on tribal knowledge encoded in Slack, tickets, runbooks, code comments, and past postmortems. The engineer with the answer may not be in the bridge, or may be asleep due to time zone rotation. Crisis memory also decays — past SEVs become difficult to learn from without structured extraction.

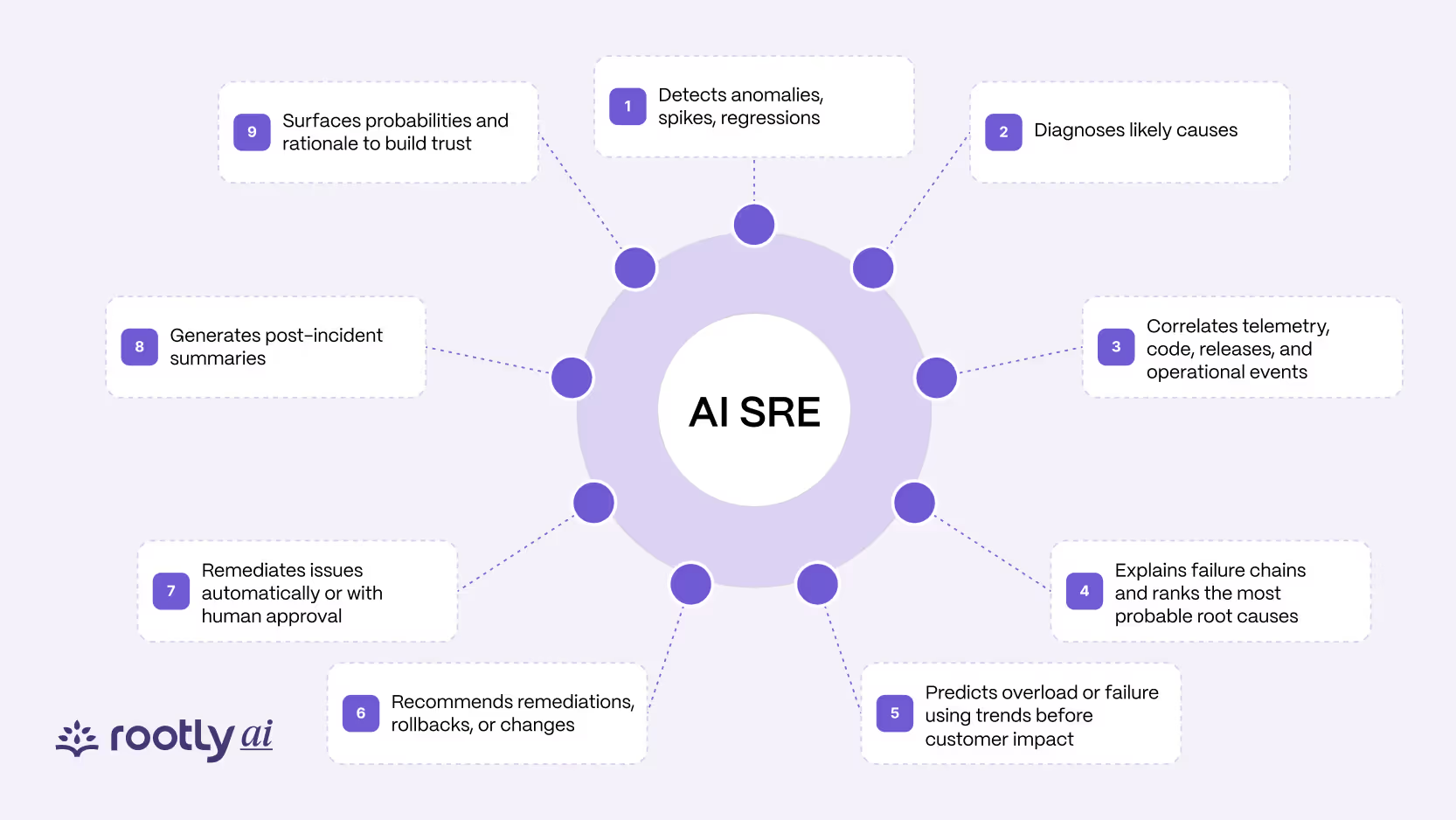

Core Capabilities of AI SRE Systems

AI SRE platforms expand what it means to operate production by executing capabilities that are traditionally spread across many tools and humans.

The core capability surface includes:

- Detection - Identifies anomalies, spikes, regressions, and patterns across logs, traces, and metrics. It filters noise so only signals relevant to reliability surface to the responder.

- Diagnosis - Correlates symptoms, dependencies, deploys, configs, and past incidents to infer likely causes. This shortens the investigation window that normally requires manual cross-system digging.

- Correlation - Connects telemetry, code, releases, and events that would not normally appear related. It builds a semantic map of how symptoms propagate through distributed systems.

- Causality - Ranks probable root causes and explains the chain of conditions that produced the failure. This allows responders to distinguish between primary causes and secondary effects.

- Prediction - Forecasts overload, saturation, or failure based on trending signals rather than thresholds. It gives teams time to intervene before an incident crosses a customer-impact boundary.

- Recommendation - Suggests remediations, rollbacks, scaling operations, or configuration changes based on learned behavior. These recommendations come with rationale so engineers understand why an action is appropriate.

- Remediation - Executes actions automatically or semi-automatically depending on risk posture. Human approval can be required for high-impact systems while low-risk operations run autonomously.

- Reporting - Generates post-incident summaries and impact narratives for leaders and customers. It adapts communication style to the audience, reducing manual drafting and coordination work.

- Confidence Scoring - Surfaces hypotheses, rationales, and probabilities so humans understand why an action is suggested. This transparency is foundational for trust, especially in early phases of adoption.

This topology creates a reliability feedback loop that turns raw telemetry into operational outcomes rather than dashboards.
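To make the Detection and Prediction capabilities a bit more concrete, here is a minimal Python sketch. It is not how any particular platform works. It assumes a single metric sampled at a fixed interval, and the function names (`zscore_anomalies`, `steps_until_limit`), the window size, and the threshold are all illustrative choices of mine. The first function flags samples that deviate sharply from their trailing window; the second fits a straight line to estimate how many samples remain before a metric crosses a limit.

```python
from statistics import mean, stdev


def zscore_anomalies(values, window=5, threshold=3.0):
    """Return indices of samples that sit more than `threshold` standard
    deviations away from the mean of the preceding `window` samples."""
    anomalies = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        mu = mean(history)
        sigma = stdev(history)  # needs window >= 2
        if sigma == 0:
            # Flat history: treat any change at all as a deviation.
            if values[i] != mu:
                anomalies.append(i)
            continue
        if abs(values[i] - mu) / sigma > threshold:
            anomalies.append(i)
    return anomalies


def steps_until_limit(values, limit):
    """Fit a least-squares line through the samples and estimate how many
    more samples it takes to reach `limit`. Returns None if the trend is
    flat or decreasing. Needs at least two samples."""
    n = len(values)
    x_mean = (n - 1) / 2
    y_mean = mean(values)
    denom = sum((x - x_mean) ** 2 for x in range(n))
    slope = sum((x - x_mean) * (y - y_mean) for x, y in enumerate(values)) / denom
    if slope <= 0:
        return None
    intercept = y_mean - slope * x_mean
    crossing = (limit - intercept) / slope
    return max(0.0, crossing - (n - 1))


# Hypothetical data: p99 latency in ms, and disk usage in percent.
latency_ms = [100, 102, 99, 101, 100, 103, 98, 250, 101]
disk_pct = [50, 55, 60, 65, 70]

spikes = zscore_anomalies(latency_ms)
samples_left = steps_until_limit(disk_pct, limit=90)
```

A real system would have to handle seasonality, multiple correlated signals, and noisy data, which a trailing z-score and a straight-line fit do not.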

How LLMs Power Incident Operations

LLMs accelerated AI SRE adoption by making the incident domain legible to machines. They perform tasks that historically required senior SREs with deep system context:

- Semantic Log Interpretation — Parses logs, traces, stack traces, and build artifacts as language with structure, intent, and causality rather than opaque strings.

- Hypothesis Generation — Produces cause candidates and failure theories based on evidence. Example:

“Payment latency likely caused by Catalog deploy at 14:03 UTC (confidence 0.74).”

- Runbook Reasoning — Reads runbooks, annotates steps, checks prerequisites, and determines which actions are safe to automate.

- Change Impact Analysis — Evaluates diffs, config mutations, schema migrations, feature flag changes, and infra drift for regressions.

- Incident Similarity Matching — Uses embeddings to retrieve past SEVs, tickets, and postmortems that match current failure signatures.

- Knowledge Graph Construction — Converts incident artifacts into a graph of services, dependencies, owners, and historical outcomes so the system can learn over time.

- Post-Incident Summaries — Produces technical, managerial, and customer-facing narratives automatically, reducing the cost of documentation and coordination.

For SRE practitioners, this eliminates the slowest part of incident handling: context assembly.
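As an illustration of Incident Similarity Matching, the sketch below ranks past incidents by cosine similarity to the current one. The three-dimensional vectors and the incident IDs are made up for readability. In practice the vectors would come from an embedding model and the search would typically run in a vector index, neither of which is shown here.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def most_similar_incidents(query_vec, past_incidents, top_k=3):
    """Return the `top_k` (incident_id, score) pairs, most similar first."""
    scored = [
        (cosine_similarity(query_vec, vec), incident_id)
        for incident_id, vec in past_incidents.items()
    ]
    scored.sort(reverse=True)
    return [(incident_id, score) for score, incident_id in scored[:top_k]]


# Hypothetical embeddings of past incident summaries.
past = {
    "INC-101": [1.0, 0.0, 0.0],
    "INC-102": [0.0, 1.0, 0.0],
    "INC-103": [0.9, 0.1, 0.0],
}
current = [1.0, 0.0, 0.0]
matches = most_similar_incidents(current, past, top_k=2)
```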

Under-the-Hood Mechanics

Modern AI SRE systems typically combine:

- LLMs for reasoning & explanation

- Embeddings for similarity search across incidents, runbooks, and postmortems

- Knowledge Graphs for causal topology + ownership

- Telemetry Pipelines for observability signals

- Workflow Engines for remediation and orchestration

These elements let AI SRE systems answer questions that previously required tribal knowledge, such as:

“Have we seen this before?”

“What changed recently?”

“What is the root cause?”

“What should we do?”

Human-in-the-Loop Reliability Model

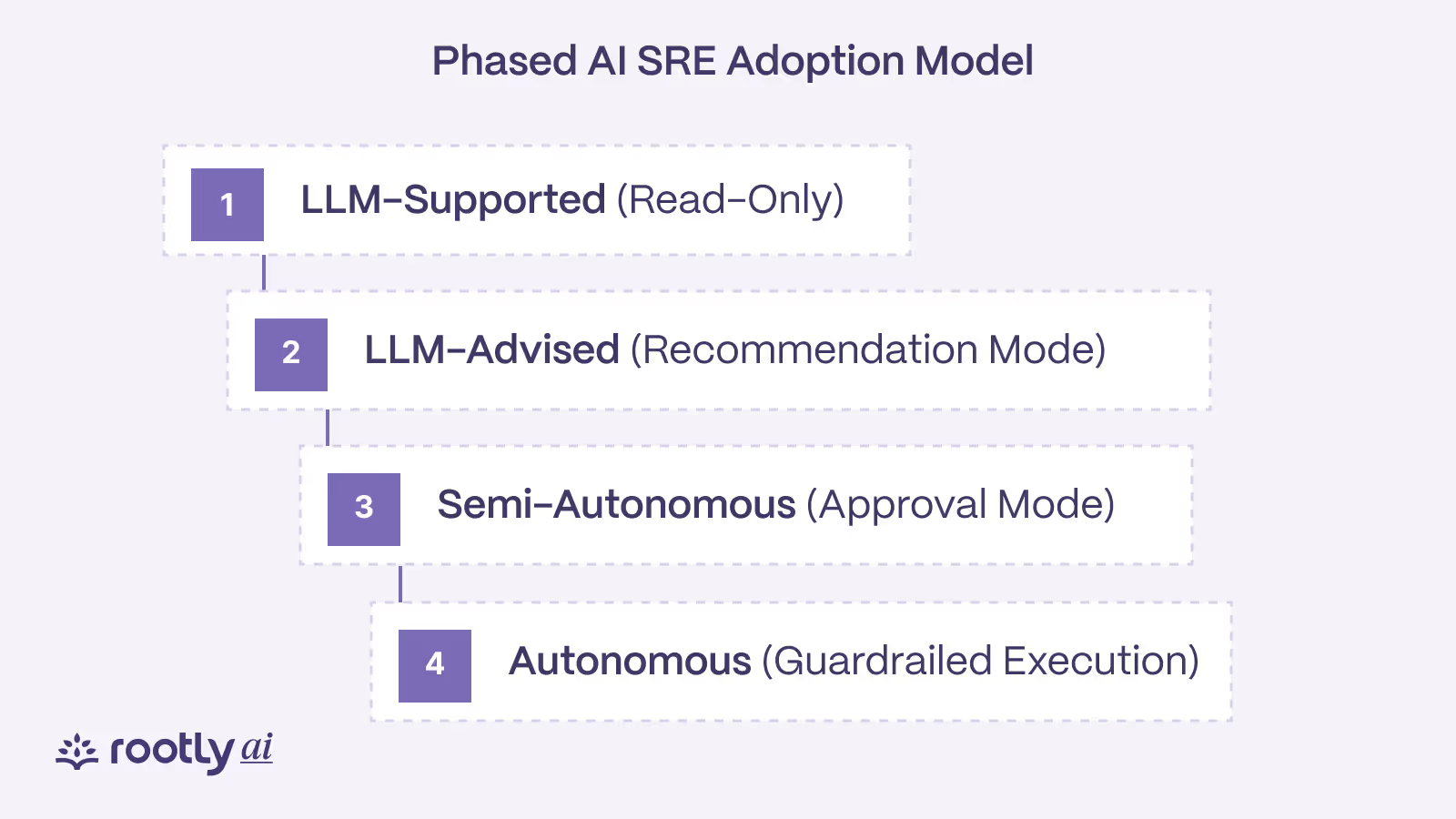

Production environments still require human authority for critical actions. AI SRE introduces graded autonomy rather than a binary on/off automation model. We see four maturity stages in the field:

- Read-Only — AI observes, correlates, summarizes, and explains.

- Advised — AI recommends actions and escalation paths.

- Approved — AI executes actions contingent on human approval.

- Autonomous — AI executes bounded remediation automatically with guardrails.

Maturity is driven by:

- model performance

- change risk

- compliance and audit requirements

- cultural trust

- user experience and explainability

Enterprises rarely jump directly to autonomous remediation; they progress through trust-building cycles during SEV handling.
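One way to picture graded autonomy is as a small decision function keyed on the current stage. The sketch below is my own simplification: the `AutonomyStage` enum, the `decide` function, and the returned strings are hypothetical names, and a real platform would weigh many more inputs than a single low-risk flag.

```python
from enum import IntEnum


class AutonomyStage(IntEnum):
    READ_ONLY = 1
    ADVISED = 2
    APPROVED = 3
    AUTONOMOUS = 4


def decide(stage, action_is_low_risk, human_approved=False):
    """Map the current maturity stage to what the system may do with a proposed action."""
    if stage == AutonomyStage.READ_ONLY:
        return "explain_only"
    if stage == AutonomyStage.ADVISED:
        return "recommend"
    if stage == AutonomyStage.APPROVED:
        return "execute" if human_approved else "await_approval"
    # AUTONOMOUS: only bounded, low-risk actions run without a human.
    if action_is_low_risk:
        return "execute"
    return "execute" if human_approved else "await_approval"
```

Note that even at the autonomous stage, a high-risk action in this sketch still waits for a human, which matches the idea of bounded remediation with guardrails.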

The 2:47 AM Test: Where AI SRE Shines

As GitHub Staff SWE Sean Goedecke says, when you get paged in the middle of the night, you are far from your peak performance. You are more of a “tired, confused, and vaguely panicky engineer.” The 2:47 AM Test illustrates when AI SRE delivers disproportionate value — during high-stakes, low-context incidents where customer impact compounds quickly and cognitive load is high.

The Scenario

It is 2:47 AM on a weekday. An e-commerce platform begins showing intermittent checkout failures. Dashboards indicate elevated error rates in the payment service, but the causal chain is unclear. The on-call engineer, already focused on a separate database issue, is paged and must reconstruct context under time pressure.

Traditional Response (Human-Only)

In a human-only workflow, the responder must manually assemble context across multiple systems:

- review logs, metrics, and traces

- inspect deploy history and feature flags

- check upstream/downstream dependencies

- validate infrastructure health

- escalate across teams for tribal knowledge

In parallel, noisy alerts and false positives expand the decision surface. The responder must not only find the real failure but also suppress misleading signals emitted from unrelated components. This increases cognitive load and elongates the investigative window.

Most of this work is operational toil, and how far it can be reduced is limited by how quickly a human can gather, correlate, and interpret clues from distributed systems. The problem compounds as microservices and ownership boundaries multiply.

This investigation loop often consumes 30–60 minutes of Mean-Time-to-Detect (MTTD) before mitigation begins. During that window:

- error budgets burn

- SLOs are breached

- revenue-per-minute deteriorates

- customer churn increases
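To get a feel for how fast an error budget burns in a window like that, here is a rough worked example in Python. It assumes a time-based availability SLO and a full outage for the whole duration. The 99.9% target, the 30-day window, and the 45-minute outage are hypothetical numbers I picked for illustration.

```python
def error_budget_minutes(slo_target, window_days=30):
    """Minutes of allowed downtime for an availability SLO over the window."""
    return (1 - slo_target) * window_days * 24 * 60


def budget_burned_fraction(outage_minutes, slo_target, window_days=30):
    """Share of the window's error budget consumed by one full outage."""
    return outage_minutes / error_budget_minutes(slo_target, window_days)


# A 99.9% SLO over 30 days allows roughly 43.2 minutes of downtime,
# so a single 45-minute outage uses more than the whole budget.
budget = error_budget_minutes(0.999)
burned = budget_burned_fraction(45, 0.999)
```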

Meanwhile, the interruption cost of waking an engineer at 2:47 AM introduces fatigue, context switching, and degraded judgment.

Once detection is complete, another manual escalation chain begins to reduce Mean-Time-to-Recovery (MTTR), often involving multiple teams and domain experts.

AI SRE Response (Machine-Assisted)

An AI SRE platform initiates parallel investigations immediately:

- Service Analysis → deploys, config changes, error signatures

- Dependency Mapping → upstream/downstream anomalies and call graphs

- Infrastructure Review → saturation, latency, network, resource exhaustion

- Historical Matching → similarity search over embeddings + past SEVs

- Change Attribution → correlates failure onset with code/config/flag changes

Because the platform suppresses false positives through correlation, responders only see reliability-relevant signals.
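The Change Attribution step can be sketched as a simple time-window filter: collect every change that landed shortly before the failure onset and list the most recent first. This is a deliberately naive version. The event shape (`id`, `at`), the 30-minute lookback, and the sample changes are assumptions of mine, and temporal proximity alone is only a starting hypothesis, not proof of cause.

```python
from datetime import datetime, timedelta


def candidate_changes(onset, changes, lookback=timedelta(minutes=30)):
    """Return changes that happened within `lookback` before `onset`,
    ordered from closest to the onset to furthest."""
    window_start = onset - lookback
    recent = [c for c in changes if window_start <= c["at"] <= onset]
    return sorted(recent, key=lambda c: onset - c["at"])


# Hypothetical change events around a 2:47 AM failure onset.
onset = datetime(2024, 1, 1, 2, 47)
changes = [
    {"id": "deploy-catalog", "at": datetime(2024, 1, 1, 1, 0)},
    {"id": "config-db-timeout", "at": datetime(2024, 1, 1, 2, 30)},
    {"id": "flag-promo", "at": datetime(2024, 1, 1, 2, 40)},
]
suspects = candidate_changes(onset, changes)
```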

Within minutes, the platform infers that:

- a configuration change increased DB connection timeouts

- a marketing campaign elevated checkout traffic

- together they exhausted the connection pool
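A back-of-the-envelope way to see why those two factors compound is Little's Law: the average number of connections in use is roughly the request rate multiplied by how long each request holds a connection. The sketch below applies it with made-up numbers. The pool size, request rates, and hold times are hypothetical, and real pools also depend on retries, queueing, and timeout behavior that this ignores.

```python
def connections_in_use(requests_per_second, avg_hold_seconds):
    """Little's Law estimate of concurrently held connections."""
    return requests_per_second * avg_hold_seconds


def pool_exhausted(requests_per_second, avg_hold_seconds, pool_size):
    """True when the estimated demand exceeds the pool size."""
    return connections_in_use(requests_per_second, avg_hold_seconds) > pool_size


POOL_SIZE = 100  # hypothetical

# Before: normal traffic, short hold time per request.
before = pool_exhausted(200, 0.2, POOL_SIZE)

# After: the campaign doubles traffic and the longer timeout lets
# slow requests hold their connections for more time on average.
after = pool_exhausted(400, 0.5, POOL_SIZE)
```

Neither change alone would necessarily cross the limit in this toy example, which is why correlating them matters.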

It then generates an incident narrative with:

- likely root cause (with confidence score)

- affected services and business flows

- SLO and customer impact

- recommended remediation paths

Because the platform performs the investigative portion automatically, responders engage only for approvals and business trade-offs, with substantial toil reduction and reduced cognitive load.

Scenario → Value → Outcome

The 2:47 AM Test consistently demonstrates measurable reliability outcomes:

Some vendors refer to the automated remediation interval as MTTR-A (Automated MTTR) — where machines execute playbooks within defined guardrails.

Generalized Pattern

When cognitive load is high and customer impact is rising, AI SRE collapses both MTTD and MTTR while suppressing false positives, reducing toil, and minimizing interruption cost.

This pattern emerges consistently during:

- after-hours on-call rotations

- SEV0–SEV2 incidents

- distributed system regressions

- multi-team escalation chains

- pager storms

- weekend/holiday load spikes

In all cases, the binding constraint is human cognitive bandwidth, not observability data.

Implementation Strategies and Best Practices

Organizations do not move directly from zero automation to autonomous remediation. Successful adoption of AI SRE follows a staged approach that builds trust, reduces risk, and aligns automation with existing incident management norms.

Adopt a Phased Maturity Model

Most teams progress through four maturity stages:

1. LLM-Supported (Read-Only)

The system observes incidents, correlates telemetry, and suggests actions. Humans remain fully in control.

2. LLM-Advised (Recommendation Mode)

The system proposes remediations with rationale and confidence scores. Engineers validate alignment with real-world operational behavior.

3. Semi-Autonomous (Approval Mode)

The system executes actions after human approval. Reversible, low-risk actions become automated.

4. Autonomous (Guardrailed Execution)

The system executes bounded actions automatically within defined guardrails. Higher-risk systems continue to require approval.

Enterprises rarely skip stages; the curve is governed by trust, observability quality, and risk posture.

Start in Read-Only Mode

Begin by allowing the platform to observe incidents, analyze patterns, and recommend actions without execution. This builds operator confidence and creates a shared frame of reference for what “good” remediation looks like.

Engineers can compare recommendations to what they would have done manually, which surfaces gaps in reasoning, runbooks, or data.

Automate Low-Risk Actions First

Once recommendations consistently align with operator behavior, organizations typically automate low-risk, reversible actions such as:

- scaling internal services

- restarting non-critical components

- clearing caches or queues

- rotating credentials

- toggling feature flags

Higher-risk flows (payments, identity, trading, government workloads) continue to require explicit approval. Risk and reversibility, not feature completeness, determine automation priority.

Establish Clear Guardrails and Boundaries

Guardrails define the operational perimeter of automation. They typically include:

- approval flows (who approves what and when)

- rollback criteria (when to revert or abort)

- blast radius limits (service / region / tenant)

- policy-as-code (enforced in CI/CD or orchestration)

- RACI for AI actions (responsible, accountable, consulted, informed)

- audit and observability paths (record of what executed and why)

Guardrails maintain safety without stalling adoption.
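As a minimal sketch of what policy-as-code for these guardrails could look like, the Python below checks a proposed action against an allow-list, a blast radius limit, and an approval rule, and returns a reason that can go into an audit record. The `Guardrail` fields, the action dictionary shape, and the tier names are all hypothetical. Real deployments often express this in a dedicated policy engine rather than application code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Guardrail:
    allowed_actions: frozenset          # actions automation may ever take
    max_services_affected: int          # blast radius limit
    approval_required_tiers: frozenset  # service tiers that need a human


def evaluate(action, guardrail):
    """Return (decision, reason) for a proposed action.
    decision is one of "deny", "require_approval", "allow"."""
    if action["name"] not in guardrail.allowed_actions:
        return "deny", "action is not on the allow-list"
    if len(action["services"]) > guardrail.max_services_affected:
        return "deny", "blast radius limit exceeded"
    if action["tier"] in guardrail.approval_required_tiers:
        return "require_approval", "service tier requires human approval"
    return "allow", "within guardrails"


def audit_record(action, decision, reason):
    """Minimal record of what was evaluated and why."""
    return {
        "action": action["name"],
        "services": list(action["services"]),
        "decision": decision,
        "reason": reason,
    }


policy = Guardrail(
    allowed_actions=frozenset({"restart", "scale_up", "toggle_flag"}),
    max_services_affected=2,
    approval_required_tiers=frozenset({"payments", "identity"}),
)
proposal = {"name": "restart", "services": ["cache"], "tier": "internal"}
decision, reason = evaluate(proposal, policy)
record = audit_record(proposal, decision, reason)
```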

Build Human-in-the-Loop Feedback Loops

Human adjudication is critical during early phases. Acceptance, rejection, or modification of AI-generated actions becomes feedback that tunes future behavior. Over time, the system learns organizational preferences such as:

- when to roll back vs roll forward

- which mitigations are reversible

- which teams own specific services

- how SLO priorities map to business impact

This feedback loop shifts the system from generic reasoning to organization-specific reasoning.
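A very simple form of this feedback loop is to log each human verdict per action type and only consider automating an action once its acceptance rate is consistently high. The sketch below assumes three verdicts (accepted, rejected, modified). The `FeedbackLog` class and the default thresholds in `ready_for_automation` are hypothetical placeholders, not recommended values.

```python
from collections import defaultdict

VERDICTS = ("accepted", "rejected", "modified")


class FeedbackLog:
    """Counts human verdicts on AI-proposed actions, grouped by action type."""

    def __init__(self):
        self._counts = defaultdict(lambda: dict.fromkeys(VERDICTS, 0))

    def record(self, action_type, verdict):
        if verdict not in VERDICTS:
            raise ValueError(f"unknown verdict: {verdict}")
        self._counts[action_type][verdict] += 1

    def total(self, action_type):
        counts = self._counts.get(action_type)
        return sum(counts.values()) if counts else 0

    def acceptance_rate(self, action_type):
        total = self.total(action_type)
        if total == 0:
            return 0.0
        return self._counts[action_type]["accepted"] / total

    def ready_for_automation(self, action_type, min_samples=20, min_rate=0.95):
        """Only suggest promotion when there is enough evidence and high agreement."""
        return (
            self.total(action_type) >= min_samples
            and self.acceptance_rate(action_type) >= min_rate
        )


log = FeedbackLog()
for verdict in ("accepted", "accepted", "accepted", "rejected"):
    log.record("restart_cache", verdict)
```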

Integrate With Existing Operational Workflows

AI SRE should extend the existing operational ecosystem rather than replace it. Integration points typically include:

- on-call scheduling systems

- incident management platforms

- observability stacks

- change management systems

- communication channels (Slack, Teams, Email)

- paging/alerting infrastructure

Fitting into familiar workflows reduces cognitive friction and accelerates adoption.

Ensure Data Integration Across Observability Surfaces

Effective AI SRE depends on broad and consistent signal access, including:

- logs

- metrics

- traces

- deployment metadata

- config changes

- code diffs

- feature flags

- runbooks

- postmortems

- ownership metadata

- service topology

Without these surfaces, the system cannot construct accurate causal graphs or propose safe remediations.

Address Organizational Adoption Blockers

Common blockers observed across enterprises include:

- data silos (telemetry trapped in unintegrated systems)

- inconsistent observability (partial logs, missing traces)

- lack of ownership clarity (who approves what)

- fear of automation (risk aversion in critical flows)

- training gaps (operators unfamiliar with AI-generated actions)

- governance policy gaps (no automation RACI or blast radius model)

These are cultural and structural issues, not technical limitations.

Align Automation with Business and Risk Posture

Not all organizations share the same tolerance for automation. For example:

- fintech prioritizes audit and reversibility

- e-commerce prioritizes latency and revenue continuity

- SaaS prioritizes SLO burn rate

- gaming prioritizes concurrency and player experience

The strategy should match the business domain, not a generic automation model.

Current Limitations and Considerations

Despite strong results in incident operations, AI SRE systems have constraints and require sound operational judgment. The following limitations shape adoption timelines, automation scope, and trust in the system.

Context and Domain Understanding

AI platforms may lack full business context. A slowdown during a maintenance window may not be urgent, while a minor latency regression during peak purchasing hours may have revenue impact. Business calendars, user cohorts, SLAs/SLOs, regulatory windows, and contractual obligations are not always encoded in telemetry.

Hallucination and Nondeterministic Output

LLMs can produce hallucinated or overconfident explanations, particularly when telemetry is sparse or ambiguous. Outputs may be nondeterministic, meaning the same input can yield different reasoning paths, requiring operators to verify hypotheses before taking action.

Distributed System Complexity

Modern infrastructure contains edge cases, partial failures, and emergent behaviors that are difficult to model. Rare interactions may produce failures that neither traditional tools nor AI systems can immediately diagnose, especially in environments with limited observability or non-standard protocols.

Automation Risk Profile

Remediation without human oversight carries business and compliance risk. Critical paths (payments, trading, authentication, government workloads) typically retain approval gates until telemetry quality, guardrails, and trust mature. Organizations must consider blast radius, reversibility, rollback capability, and auditability before autonomous execution.

Auditability, Traceability, and Governance

Enterprises require visibility into what executed, why, and under what conditions. Without audit logs, reasoning traces, and decision artifacts, automated remediation becomes difficult to govern. Regulated industries often require:

- trace logs of decisions

- approval workflows

- rollback history

- policy-as-code enforcement

- risk scoring models

These requirements are not optional in financial, healthcare, or government environments.

Integration Overhead and Data Dependencies

Effective AI SRE relies on access to diverse telemetry surfaces:

- logs

- traces

- metrics

- deployment metadata

- config changes

- code diffs

- feature flags

- runbooks

- postmortems

If observability is inconsistent or siloed, diagnosis and remediation quality degrades. Upfront engineering work is required to stitch these surfaces together across tooling ecosystems.

Security, Trust Boundaries, and Data Privacy

Incident artifacts may contain sensitive customer data, infrastructure details, internal topology, or operational secrets. Organizations must consider:

- trust boundaries between components

- data residency constraints

- cross-tenant isolation

- PII and PHI leakage

- training data exposure

- inference sandboxing

Security teams often gate adoption until boundaries are clear.

Model Accuracy and Evaluation

Root cause analysis (RCA) accuracy and remediation recommendation quality vary by domain, system maturity, telemetry density, and incident type. RCA expectations should be calibrated against baselines such as “faster triage” rather than “infallible diagnosis.”

Cost Modeling for Inference Workloads

Inference workloads (particularly those requiring continuous streaming analysis) introduce new cost curves tied to model size, data volume, and concurrency. Platform teams must evaluate:

- steady-state inference cost

- peak incident inference cost

- retrieval/storage overhead

- fine-tuning and training cycles

Cost efficiency varies significantly across vendors and architectures.
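For a first-pass estimate, steady-state and peak incident costs can be added up with simple arithmetic. The sketch below assumes token-based pricing, which is common for hosted LLMs but not universal, and every number in it is hypothetical. It also leaves out retrieval, storage, and fine-tuning costs.

```python
def monthly_inference_cost(
    steady_tokens_per_hour,
    tokens_per_incident,
    incidents_per_month,
    price_per_million_tokens,
    hours_per_month=730,
):
    """Rough monthly cost: continuous analysis plus per-incident bursts."""
    steady_tokens = steady_tokens_per_hour * hours_per_month
    incident_tokens = tokens_per_incident * incidents_per_month
    return (steady_tokens + incident_tokens) / 1_000_000 * price_per_million_tokens


# Hypothetical workload: 100k tokens/hour of background analysis,
# 10 incidents a month at 2M tokens each, at 2.00 per million tokens.
estimate = monthly_inference_cost(
    steady_tokens_per_hour=100_000,
    tokens_per_incident=2_000_000,
    incidents_per_month=10,
    price_per_million_tokens=2.0,
)
```

With these inputs the steady-state portion dominates (73M of 93M tokens), which is why continuous streaming analysis deserves scrutiny in any cost model.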

Training Data and Label Scarcity

Most organizations lack large, consistently labeled incident datasets. Runbooks, tickets, chat threads, and postmortems often exist but are unstructured. This limits supervised learning and requires heavy use of embeddings, retrieval, or heuristic patterning to compensate.

Compliance and Regulatory Constraints

Industries with strict regulatory mandates (e.g., finance, healthcare, energy, government) have constraints on automation, record retention, explainability, and human oversight. These constraints shape which portions of incident handling can be delegated to AI.

Organizational and Role Impacts

AI SRE does not replace SREs. It changes what SREs spend time on.

We observe three outcomes across teams piloting AI SRE:

- Lower Toil and Cognitive Overhead - Repetitive investigations, triage, and summarization are reduced or eliminated.

- Shift From Firefighting to Engineering - Teams invest more time on resilience work, architectural remediation, and developer enablement.

- Redistribution of Knowledge - Incident knowledge moves from individual heads to a shared system that can recall it on demand.

To CTOs, this translates to increased engineering leverage rather than staff replacement.

Common AI SRE Use Cases

Enterprises adopt AI SRE to address categories of operational pain:

- accelerated root cause analysis

- predictive overload detection

- dynamic scaling and resource tuning

- business-aware alerting and prioritization

- automatic rollback or throttling

- dependency graph and blast radius mapping

- incident summarization and postmortem drafting

- on-call load reduction

- resilience validation in pre-production

Each use case aligns to a measurable metric (detection, diagnosis, remediation, or recovery).
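To illustrate the dependency graph and blast radius mapping use case, here is a minimal breadth-first traversal in Python. It assumes you already have a map from each service to the services that call it. The service names and the `blast_radius` helper are hypothetical, and real topologies would also need to account for partial degradation, fallbacks, and caching.

```python
from collections import deque


def blast_radius(dependents, failed_service):
    """Return every service that directly or transitively depends on `failed_service`.

    `dependents` maps a service to the list of services that call it.
    """
    affected = set()
    queue = deque([failed_service])
    while queue:
        service = queue.popleft()
        for caller in dependents.get(service, []):
            if caller != failed_service and caller not in affected:
                affected.add(caller)
                queue.append(caller)
    return affected


# Hypothetical topology: who calls whom.
dependents = {
    "db": ["payments", "catalog"],
    "payments": ["checkout"],
    "catalog": ["checkout", "search"],
}
impacted = blast_radius(dependents, "db")
```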

Implementation and Buying Considerations

Rolling out AI SRE is not a feature toggle. The most successful implementations follow a deliberate path:

Identify Pain Concentrations - Noisy alerts, brittle dependencies, and after-hours pages deliver the fastest ROI.

Pilot in Low-Risk Domains - Non-critical or internal workflows allow models to build trust without business exposure.

Integrate Data Sources Early - Telemetry quality dictates model output quality.

Define Automation Policies - Human-in-the-loop rules, approvals, and rollbacks form part of the reliability contract.

Evaluate Vendors By Capability - Teams compare platforms by:

- observability coverage

- dependency modeling

- remediation surface

- LLM quality and interpretability

- knowledge retention

- integration flexibility

- confidence scoring

- security and compliance posture

Buying decisions are less about hype and more about operational fit.

Economic Outcomes and Reliability Metrics

AI SRE success is not measured only in uptime. Leaders measure across three categories:

Technical Metrics

- mean time to detection

- mean time to resolution

- percentage of automated responses

- regression frequency

- error budget burn
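The first two metrics can be computed directly from incident timestamps. The sketch below assumes each incident record carries `started_at`, `detected_at`, and `resolved_at` fields, which are names I made up, and it measures both intervals from the start of impact. Definitions of MTTD and MTTR vary between teams, so treat this as one possible convention.

```python
from datetime import datetime


def mean_minutes(incidents, start_key, end_key):
    """Average gap in minutes between two timestamps across incidents."""
    gaps = [
        (incident[end_key] - incident[start_key]).total_seconds() / 60
        for incident in incidents
    ]
    return sum(gaps) / len(gaps)


# Hypothetical incident records.
incidents = [
    {
        "started_at": datetime(2024, 1, 1, 2, 47),
        "detected_at": datetime(2024, 1, 1, 2, 57),
        "resolved_at": datetime(2024, 1, 1, 3, 27),
    },
    {
        "started_at": datetime(2024, 1, 8, 14, 0),
        "detected_at": datetime(2024, 1, 8, 14, 20),
        "resolved_at": datetime(2024, 1, 8, 15, 0),
    },
]

mttd = mean_minutes(incidents, "started_at", "detected_at")
mttr = mean_minutes(incidents, "started_at", "resolved_at")
```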

Org and Productivity Metrics

- reduction in on-call pages

- reduction in after-hours work

- reduction in triage time

- increase in engineering time spent on roadmap

Business Metrics

- revenue protected from outages

- customer satisfaction during incidents

- infrastructure cost optimization

- feature velocity due to reduced firefighting

CTOs increasingly evaluate AI SRE as a force multiplier rather than a cost center.

The Future of AI-Driven Reliability

The next wave of AI SRE moves from assisted remediation to closed-loop infrastructure.

We anticipate several trajectories:

- Autonomous Reliability Meshes - Services negotiate resources, adjust topology, and apply resilience heuristics.

- Proactive Optimization - Systems tune themselves before failure manifests.

- Cross-Organizational Learning - Incident data will be anonymized and shared across platforms, creating a reliability knowledge commons.

- Developer Workflow Integration - Reliability feedback will surface during code review rather than incident review.

The long arc points toward production systems that degrade gracefully and repair themselves without paging a human.

Conclusion

AI SRE is a shift in how we think about running production. By combining the pattern-recognition power of AI with the hard-earned practices of site reliability engineering, we get systems that don’t just alert on problems but can help resolve them.

Sure, the tech is still maturing. There are limitations, edge cases, and plenty of integration work ahead. But the teams that start experimenting now will be ahead of the curve when more advanced capabilities land.

Success with AI SRE doesn’t come from flipping a switch. It takes thoughtful rollout, tight integration with your workflows, feedback loops that keep improving the system, and a team that understands how to work with its new AI capabilities.

The future of reliability is intelligent, proactive, and collaborative. And the sooner you start that journey, the sooner your team can spend less time firefighting, and more time shipping great things.

Curious where to begin? Start by mapping out your biggest operational headaches and identifying the workflows where automation can create real leverage. That is where AI SRE starts to earn its place on the team. At Rootly, we help organizations do exactly that and make the rollout practical.

Ready to explore it? Book a demo.